ELM3: Contrafactives and Interdisciplinary Work

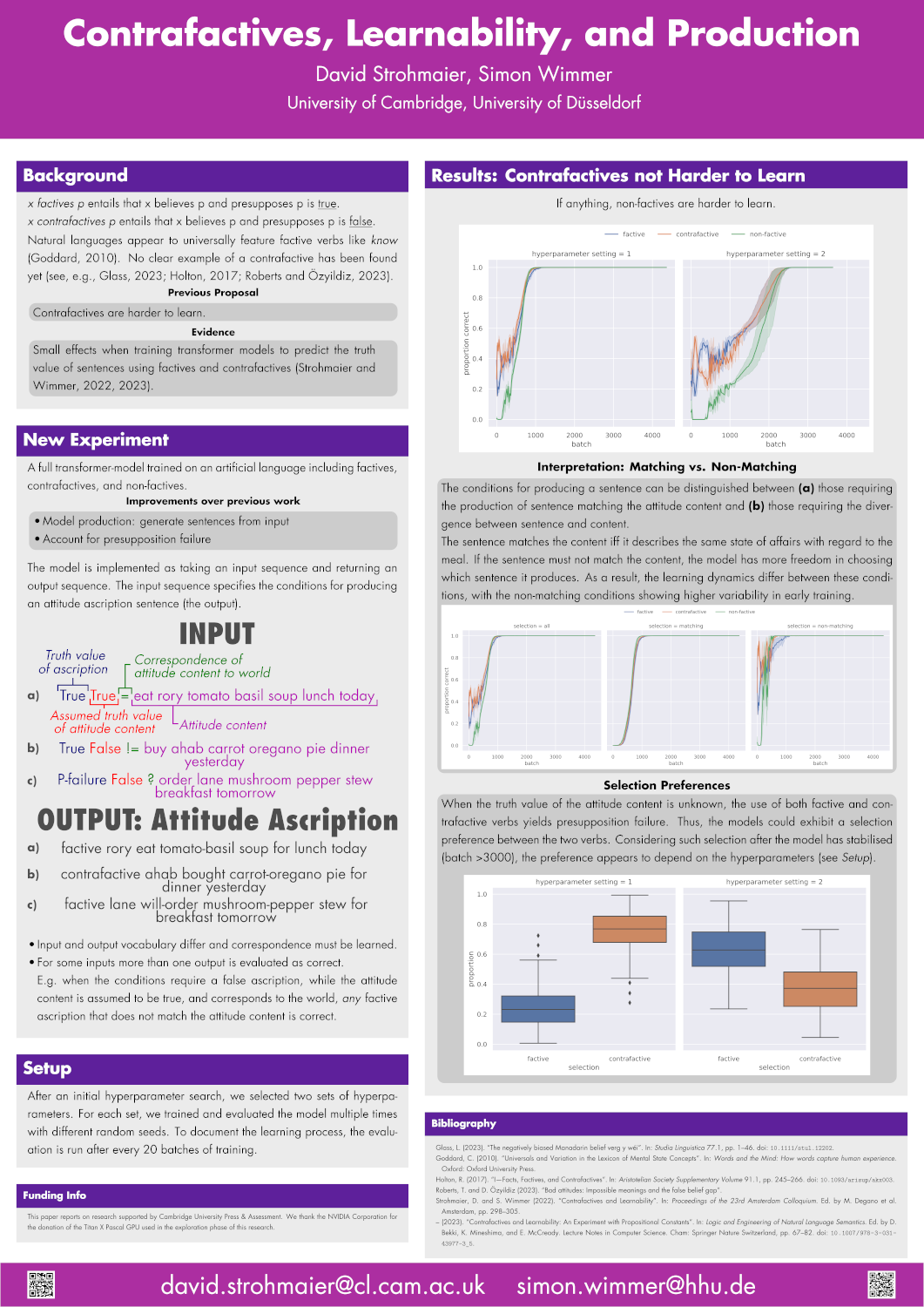

Last week, I had the pleasure of presenting a poster at the third conference on Experiments in Linguistic Meaning (ELM3). My poster presented the latest collaborative work with Simon Wimmer on the topic of contrafactives. Click here to get the poster as a PDF.1 A paper will follow.

Walking interested conference participants through the poster, I liked to start as follows:

We are investigating contrafactives, a type of verb that does not exist.

I used the paradoxical statement as a hook, but of course it twists the matter a little: Simon and I are interested in the non-existence of contrafactives. Why are contrafactives (verbs that attribute a propositional attitude and presuppose the falsehood of the attitude’s content) not lexicalised in any language?2 Why are factives such as “know” (near?) universal, while contrafactives are entirely absent from the lexicon? Why is there no word that has the meaning of “falsely believe”?

Simon and I had proposed that contrafactives might be harder to learn and found some limited evidence for it in two previous papers considering comprehension. This time we looked at generation and didn’t find any evidence to that effect at all. What does that tell us about the previous two papers? I don’t know. The previous results might have been flukes, despite the statistical significance of the results. Or there could be an asymmetry between comprehension and production. We hope that future research can resolve our puzzlement.

Observations on ELM3 and Interdisciplinarity

The conference was interdisciplinary, combining linguistics and cognitive science, with a pinch of philosophy added for flavour. Many of the methods were computational: computer-based corpus linguistics, Bayesian inference, etc. Nonetheless, I stood out as a computer scientist because these days working in NLP is almost a proper subset of working on neural network models. I was a little surprised to find that my poster was one of very few pieces of research using transformer models.3

With all the publicity for LLMs these days, it is almost encouraging to see that other work continues. Given all the resources being committed to LLMs and the like, I’m worried about an excessive concentration of research capital. ELM3 assuaged these fears somewhat, although chatting with PhDs and postdocs about the lack of job openings in formal semantics reminded me of the limitations of a field without direct industry application — philosophy PhDs are familiar with the situation.

I don’t mind having been the only researcher at the conference to train or fine-tune a transformer for their work. That being said, I’m a bit puzzled by how little impact the relative success of neural models had on the cognitive science aspect of ELM3. I heard more about the Language of Thought (LoT) than about connectionism. Does the success of neural methods in NLP not support a connectionist approach to cognition? The approach might be incorrect, but some more discussion would have been in order. What do we have to rethink about semantics if transformer models capture at least some aspects of it?

Footnotes

1. I corrected a typo after the conference.
2. At least so far, no one has been able to produce an example.
3. I recall seeing one poster by Karl Mulligan and Kyle Rawlins using BERTScores.
